Update: This post comes later than my usual Friday morning drop because I kept writing and rewriting, wanting to put out something truly valuable.

To make up for it, this week you will get an article on both Wednesday and Friday, on the same topic: Designing Developer Surveys.

This will be a three-part series looking at the purpose of surveys, when we need them, how to design them, and how to analyse the data once we've got it.

Before diving into why and when we need Developer Surveys, I want to make a critical caveat that I will repeat a few times throughout this series.

Where possible, get a good survey tool, help from proper researchers to design the survey, or both. Framing the questions (and the answer options for any multiple-choice questions) is crucial for data accuracy.

Please only start sending surveys out if you are sure how to do it. Otherwise, you will end up with inaccurate information and you risk creating survey fatigue among the developers.

If you don't have any of those available, I hope this guide will serve as a starting point, but you should do some research beforehand nonetheless.

With that in mind, let's consider the purpose of surveying and when you need it.

When conducting surveys, the primary goal is to gather insights and feedback from a target audience. These responses can help you improve your company's products or services, whether internal or consumer-facing.

It's important to note that research isn't only about discovering something entirely new. It can also be a means of gaining a deeper understanding of a topic that is already known, or of confirming a hypothesis. By conducting surveys, you gather data that can help you make informed decisions and improve your overall product strategy.

In academia, we distinguish primary research (collecting new data yourself, for example through a survey) from secondary research (reviewing data and findings that already exist). Secondary research is essential preparatory work before your primary research, since it massively reduces the risk of reinventing the wheel because you didn't know something had been done before. It is also something to bear in mind when framing your research question(s).

There are three fundamental steps before going ahead and designing the surveys. This is my take on it, but your circumstances might differ, so don't take it as a mandated framework.

Setting out your survey objective

To effectively design and conduct a survey, it is important to define the specific goals and objectives of the data collection. In this case, it is crucial to determine what you want to learn from developers.

Do you want to get feedback on the tools they use, identify pain points, or assess how they feel about company culture or specific processes?

By being clear on your objectives, you can guide the survey creation process and ensure that you can collect data that is relevant to your main research question.

Secondly, once you have clarified your objectives, prioritise them. If you have several, rank them by their importance or impact on your product roadmap. This will help you get the most value, reduce survey fatigue and ensure a solid internal feedback loop.
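If you like to keep this sort of thing in a script rather than a spreadsheet, here is a minimal sketch of the ranking in Python. The 1-5 scores, the `Objective` fields, the example objectives and the idea of multiplying importance by roadmap impact are all my assumptions for illustration, so swap in whatever scoring makes sense for your team.

```python
from dataclasses import dataclass


@dataclass
class Objective:
    """One survey objective with hypothetical 1-5 scores."""
    name: str
    importance: int      # how much the answer matters to you (1-5)
    roadmap_impact: int  # how much it could change the roadmap (1-5)


def prioritise(objectives, limit=3):
    """Return the top `limit` objectives, highest combined score first."""
    ranked = sorted(
        objectives,
        key=lambda o: o.importance * o.roadmap_impact,
        reverse=True,
    )
    return ranked[:limit]


# Hypothetical objectives, made up for this example.
objectives = [
    Objective("Feedback on the CI tooling", importance=4, roadmap_impact=5),
    Objective("Onboarding pain points", importance=5, roadmap_impact=3),
    Objective("Views on the code review process", importance=3, roadmap_impact=2),
    Objective("Feelings about team culture", importance=2, roadmap_impact=2),
]

for objective in prioritise(objectives, limit=3):
    print(objective.name)
```

Capping the list with `limit` is the part that matters most: anything that doesn't make the cut can wait for a later survey instead of making this one longer.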

With that in mind, let's talk about the second key step.

Understand the context and target audience

Once your objectives are set, consider the broader context in which the survey will be conducted.

Are there any ongoing initiatives, upcoming releases, or changes in your product or platform that might influence the survey results? Have any changes in leadership or strategic direction happened?

Understanding the context will help you interpret the survey findings more effectively and consider whether it's the right time to survey people.

In addition, consider the demographics of your target audience. In layman's terms: who are your users? A helpful exercise here is to segment your users into personas and discuss them in detail with your team.

I would only encourage you to send out a survey if you have a clear picture of your users, their skill levels, motivations, and pain points. This is particularly relevant for developers, as there are different types of devs and, naturally, they care about different things.

This information can be used to tailor your survey questions and ensure that you can collect data representative of the user group you are surveying.

Tip: I usually pilot-test the surveys with a small sample of respondents both in my PM'ing role and my part-time academic career. This has helped me in the past to identify any potential issues with the survey design or wording and make adjustments as necessary. There is a risk of confirmation bias here, which I will touch upon in Part two.

The above is take-it-or-leave-it advice, but it can be valuable depending on your context.

Involve Stakeholders

I can't write something for PMs without mentioning the holy word: stakeholders.

Engaging with relevant stakeholders, such as other product managers, developers, or execs, is essential to gather their input on the objectives.

They may have additional perspectives or insights that sharpen the survey's goals.

Collaborating with stakeholders also helps ensure buy-in and support for the survey process.

Be careful not to turn this into a survey design committee. Instead, get their feedback on the objectives and on the survey's timeline, so that it doesn't clash with other company-wide surveys. Finally, they can be your champions and help you with engagement and responses.

By taking these steps, you'll have a solid start for creating a meaningful survey that will provide valuable insights and help you achieve your objectives. Moreover, you can evaluate the survey's success by measuring how well you've met those objectives.

Create a simple cheat sheet with the above steps so you can visualise them before formulating your research questions and designing the survey.

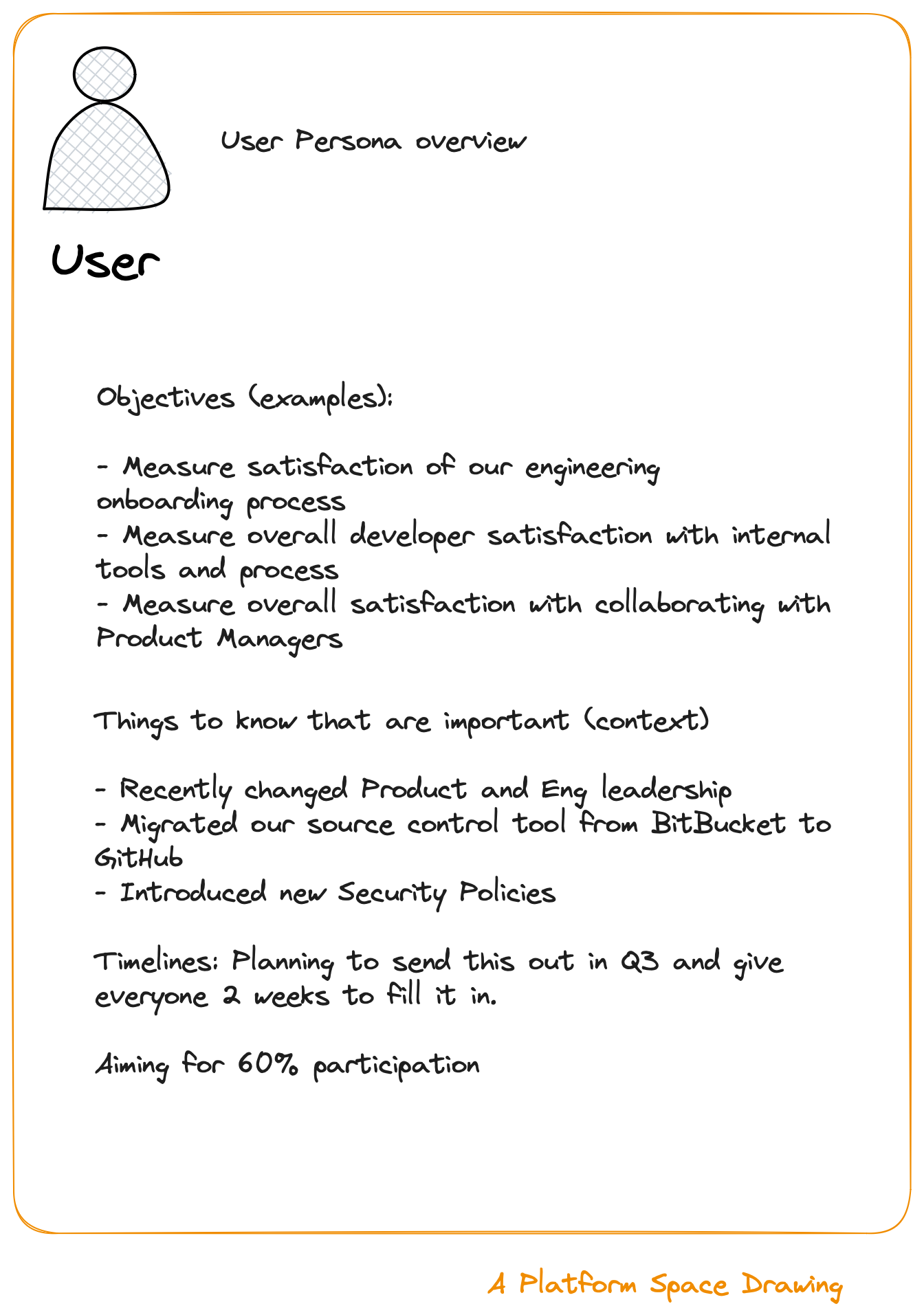

Something like this:

Finally, before discussing designing the questions for the survey, one last important thing to mention is timing.

The timing of your survey should strike a balance between gathering timely feedback and giving developers sufficient time to engage with the product or process.

Avoid sending surveys too frequently because it may lead to survey fatigue and lower response rates. It's also essential to consider the availability and workload of developers, ensuring that the survey doesn't coincide with critical deadlines, other demanding periods, or any creative time they have for themselves.
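If you want a quick sanity check before picking a date, the two rules above (leave a gap between surveys, avoid busy periods) can be sketched in a few lines of Python. The function name, the 90-day default gap and the example dates are all hypothetical and only there for illustration, so treat them as placeholders rather than recommendations.

```python
from datetime import date, timedelta


def can_send_survey(send_on, last_sent, busy_periods, min_gap_days=90):
    """Return True if `send_on` respects the gap and avoids busy periods.

    busy_periods is a list of (start, end) date pairs, both inclusive.
    min_gap_days is an illustrative default, not a recommendation.
    """
    if last_sent is not None and send_on - last_sent < timedelta(days=min_gap_days):
        return False  # too soon after the previous survey
    for start, end in busy_periods:
        if start <= send_on <= end:
            return False  # clashes with a deadline or other busy period
    return True


# Hypothetical calendar, made up for this example.
busy = [
    (date(2024, 3, 11), date(2024, 3, 22)),  # release crunch
    (date(2024, 4, 1), date(2024, 4, 5)),    # hack week
]
last = date(2024, 1, 8)

print(can_send_survey(date(2024, 3, 15), last, busy, min_gap_days=60))
print(can_send_survey(date(2024, 4, 10), last, busy, min_gap_days=60))
```

With this made-up calendar, the first date falls inside the release crunch and is rejected, while the second clears both rules. The busy periods are the bit worth keeping up to date, ideally with input from the teams themselves.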

I know! It's not easy, but it's doable, and being mindful of their time will help you in the long run.

Ultimately, the ideal timing for sending developer surveys will also depend on your specific circumstances and objectives.

I hope you found this useful and I will be back on Friday with more Developer Surveys content!
